Simplified DRAW Model for Generative Image Modeling

Implementation of a simplified DRAW-style recurrent variational autoencoder for iterative image reconstruction and generation on Fashion-MNIST.

This work investigates recurrent generative modeling through a simplified implementation of the DRAW (Deep Recurrent Attentive Writer) architecture, applied to the Fashion-MNIST dataset.

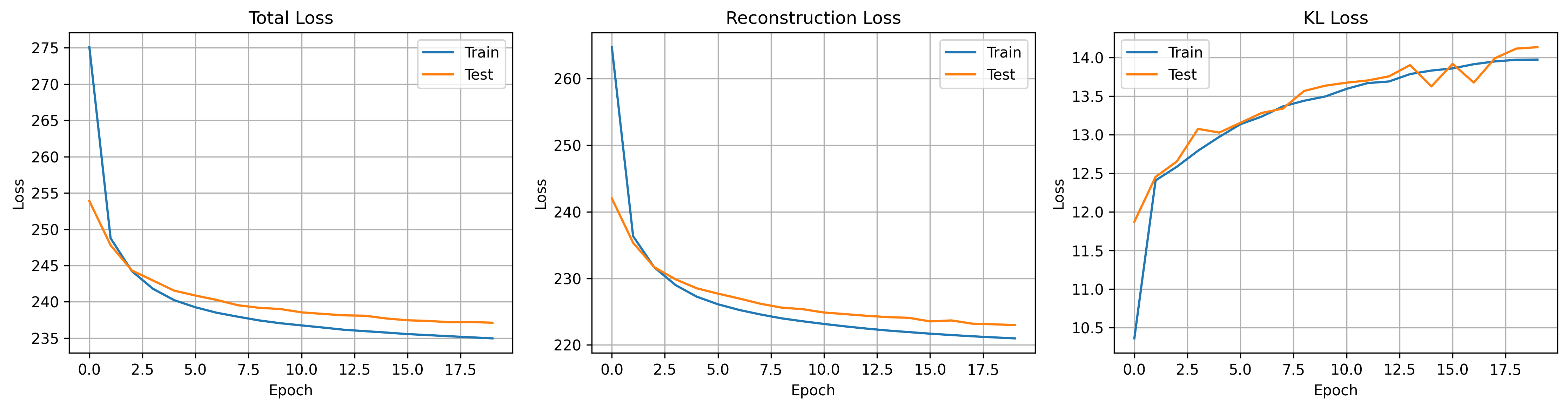

The model is formulated as a recurrent variational autoencoder (VAE) that refines its reconstruction over several time steps: at each step an encoder LSTM reads the input image, a latent variable is sampled from the resulting distribution, and a decoder LSTM writes an update to a shared canvas. The study focuses on how structured latent representations are learned and on the trade-off between reconstruction fidelity and latent regularization, in a computationally lightweight setting.
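The iterative refinement can be written out explicitly. The block below follows the no-attention case of the original DRAW formulation (Gregor et al., 2015), in which `read` concatenates the image with the error image and `write` is a linear layer. Setting β = 1 gives the standard DRAW loss. It is an assumption that the simplified implementation uses exactly this recurrence, a Bernoulli likelihood D, and a standard-normal prior at every step.

```latex
\begin{aligned}
\hat{x}_t &= x - \sigma(c_{t-1}) \\
r_t &= [\,x,\ \hat{x}_t\,] \\
h^{enc}_t &= \mathrm{LSTM}^{enc}\big(h^{enc}_{t-1},\ [\,r_t,\ h^{dec}_{t-1}\,]\big) \\
(\mu_t,\ \log\sigma_t^2) &= \mathrm{Linear}(h^{enc}_t), \qquad
z_t = \mu_t + \sigma_t \odot \epsilon_t,\quad \epsilon_t \sim \mathcal{N}(0, I) \\
h^{dec}_t &= \mathrm{LSTM}^{dec}\big(h^{dec}_{t-1},\ z_t\big) \\
c_t &= c_{t-1} + W_{write}\, h^{dec}_t \\[4pt]
\mathcal{L} &= -\log D\big(x \mid \sigma(c_T)\big)
  + \beta \sum_{t=1}^{T} \mathrm{KL}_t \\
\mathrm{KL}_t &= \mathrm{KL}\big(\mathcal{N}(\mu_t, \sigma_t^2)\ \|\ \mathcal{N}(0, I)\big)
  = \tfrac{1}{2} \sum_{j} \big(\mu_{t,j}^2 + \sigma_{t,j}^2 - \log\sigma_{t,j}^2 - 1\big)
\end{aligned}
```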

Model Design

- Encoder–decoder architecture built from LSTM modules

- Latent variable sampling via the reparameterization trick

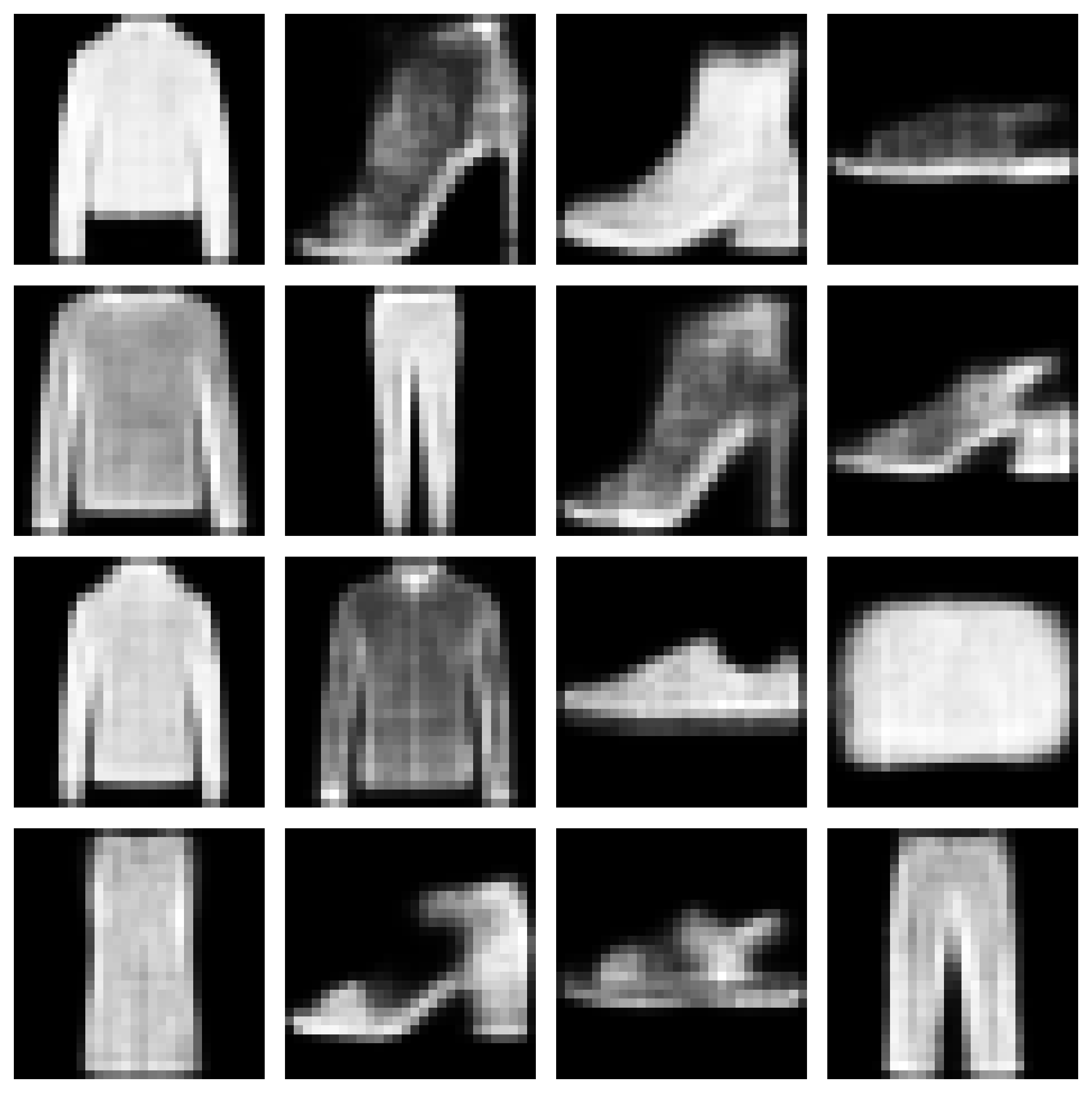

- Iterative canvas refinement for progressive reconstruction

- Simplified variant without the attention mechanism, for computational efficiency
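A minimal sketch of the sampling side of this design follows. It is written in pure Python so it runs without a deep-learning framework. It shows the reparameterization trick and how a canvas accumulates decoder writes over several steps before a sigmoid maps it to pixel intensities. `ToyDecoder` is hypothetical: a tanh RNN cell with random, untrained weights stands in for the decoder LSTM and write layer. The step count and hidden size are illustrative choices. The canvas size 784 is the 28 × 28 Fashion-MNIST image, and the latent size 16 is one of the studied values.

```python
import math
import random


def sigmoid(v):
    # Numerically stable logistic function.
    if v >= 0:
        return 1.0 / (1.0 + math.exp(-v))
    e = math.exp(v)
    return e / (1.0 + e)


def matvec(matrix, vec):
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]


def random_matrix(rows, cols, rng, scale=0.1):
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]


def reparameterize(mu, logvar, rng):
    """Reparameterization trick: z = mu + exp(0.5 * logvar) * eps, eps ~ N(0, 1).

    All randomness lives in eps, so z is a deterministic (differentiable)
    function of mu and logvar.
    """
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, logvar)]


class ToyDecoder:
    """Hypothetical stand-in for the decoder LSTM plus linear write layer.

    A tanh RNN cell replaces the LSTM to keep the sketch short; weights are
    random, not trained.
    """

    def __init__(self, latent_dim, hidden_dim, image_dim, rng):
        self.w_h = random_matrix(hidden_dim, hidden_dim, rng)
        self.w_z = random_matrix(hidden_dim, latent_dim, rng)
        self.w_write = random_matrix(image_dim, hidden_dim, rng)
        self.h = [0.0] * hidden_dim

    def step(self, z):
        pre = [a + b for a, b in zip(matvec(self.w_h, self.h), matvec(self.w_z, z))]
        self.h = [math.tanh(p) for p in pre]
        return matvec(self.w_write, self.h)  # additive canvas update


def generate(decoder, steps, latent_dim, image_dim, rng):
    """Sample z_t ~ N(0, I) at each step and accumulate decoder writes on the canvas."""
    decoder.h = [0.0] * len(decoder.h)  # reset recurrent state
    canvas = [0.0] * image_dim
    snapshots = []
    for _ in range(steps):
        z = reparameterize([0.0] * latent_dim, [0.0] * latent_dim, rng)  # prior sample
        canvas = [c + d for c, d in zip(canvas, decoder.step(z))]
        snapshots.append([sigmoid(c) for c in canvas])  # pixel intensities in (0, 1)
    return snapshots


if __name__ == "__main__":
    rng = random.Random(0)
    dec = ToyDecoder(latent_dim=16, hidden_dim=32, image_dim=28 * 28, rng=rng)
    frames = generate(dec, steps=10, latent_dim=16, image_dim=28 * 28, rng=rng)
    print(len(frames), len(frames[-1]))  # 10 784
```

Each entry of the returned list is the canvas after one more step. During training, `mu` and `logvar` would come from the encoder LSTM rather than the prior, and the same sampling line lets gradients flow back through them.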

Experiments

- Latent dimension study: 8, 16, 32

- KL regularization weight (β-VAE): β = 0.5, 1.0, 2.0

- Evaluation of the trade-off between reconstruction quality and latent structure
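The β sweep changes only the weight on the KL term. The sketch below computes that objective for a toy example. It assumes a Bernoulli (binary cross-entropy) reconstruction term on pixels scaled to [0, 1] and a standard-normal prior at every step. The function names and example numbers are hypothetical. With β = 1 the objective reduces to the usual VAE/DRAW bound. β < 1 favors reconstruction, and β > 1 pushes each step's posterior toward the prior.

```python
import math


def gaussian_kl(mu, logvar):
    """Closed-form KL(N(mu, diag(exp(logvar))) || N(0, I)), summed over latent dims."""
    return 0.5 * sum(m * m + math.exp(lv) - lv - 1.0 for m, lv in zip(mu, logvar))


def bernoulli_nll(x, p, eps=1e-7):
    """Binary cross-entropy -log D(x | p), summed over pixels; x assumed in [0, 1]."""
    total = 0.0
    for xi, pi in zip(x, p):
        pi = min(max(pi, eps), 1.0 - eps)  # clamp to avoid log(0)
        total -= xi * math.log(pi) + (1.0 - xi) * math.log(1.0 - pi)
    return total


def draw_loss(x, canvas_probs, mus, logvars, beta):
    """Beta-weighted objective: reconstruction + beta * sum over steps of KL_t."""
    recon = bernoulli_nll(x, canvas_probs)
    kl = sum(gaussian_kl(mu, lv) for mu, lv in zip(mus, logvars))
    return recon + beta * kl, recon, kl


if __name__ == "__main__":
    x = [0.0, 1.0, 1.0, 0.0]            # toy 4-pixel "image"
    probs = [0.1, 0.8, 0.9, 0.2]        # sigmoid(c_T) from the decoder
    mus = [[0.5, -0.5], [0.1, 0.0]]     # T = 2 steps, latent_dim = 2
    logvars = [[0.0, -1.0], [0.2, 0.0]]
    for beta in (0.5, 1.0, 2.0):
        total, recon, kl = draw_loss(x, probs, mus, logvars, beta)
        print(f"beta={beta}: total={total:.4f} recon={recon:.4f} kl={kl:.4f}")
```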

Results

- Stable convergence with consistent reduction in reconstruction loss

- Learned structured latent representations

- Generated samples capture meaningful Fashion-MNIST patterns

- Smoothing (blur) artifacts, as expected for VAE-based models

Key Insights

- Latent dimensionality significantly affects representation capacity

- β-VAE regularization introduces a clear reconstruction–structure trade-off

- Recurrent generative models can learn meaningful structure even without attention

Repository

Full implementation and reproducible experiments:

https://github.com/md-naim-hassan-saykat/draw-fashion-mnist-generative-model