CycleGAN: Horse ↔ Zebra (Unpaired Image Translation)

PyTorch implementation of CycleGAN for unpaired image-to-image translation, with qualitative results and quantitative evaluation using SSIM and PSNR.

This project implements Cycle-Consistent Generative Adversarial Networks (CycleGAN) for unpaired image-to-image translation, focusing on the canonical Horse ↔ Zebra benchmark. Following the original CycleGAN formulation, the goal is to learn mappings between two visual domains without paired training data. The model learns these cross-domain mappings from unpaired images alone, and its outputs on test samples are assessed both visually and with SSIM and PSNR.

The implementation is built in PyTorch and emphasizes reproducibility, training stability, and evaluation that complements visual inspection with quantitative image-similarity metrics.

Model Overview

CycleGAN Architecture

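- Two generators: G_H→Z (horse → zebra) and G_Z→H (zebra → horse)

- Two discriminators, one per domain, that distinguish real images from translated ones

- Cycle-consistency loss, which penalizes the difference between an image and its round-trip reconstruction (e.g., horse → zebra → horse), to preserve structure

- Identity loss, which encourages each generator to leave an image already in its target domain unchanged, to stabilize color and texture mapping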
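The four components above combine into a single training objective. The formulation below follows the original CycleGAN paper, written with this task's generator names. Here h denotes a horse image and z a zebra image; the names D_H, D_Z, λ_cyc and λ_id are notation introduced for illustration. The paper states the adversarial term in the log-likelihood form shown but trains with a least-squares variant, and reports λ = 10 for the cycle term. The weights used in this repository are not stated here and may differ.

```latex
\begin{aligned}
\mathcal{L}_{\text{GAN}}(G_{H\to Z}, D_Z) &= \mathbb{E}_{z}\big[\log D_Z(z)\big] + \mathbb{E}_{h}\big[\log\big(1 - D_Z(G_{H\to Z}(h))\big)\big] \\
\mathcal{L}_{\text{cyc}} &= \mathbb{E}_{h}\big[\lVert G_{Z\to H}(G_{H\to Z}(h)) - h \rVert_1\big] + \mathbb{E}_{z}\big[\lVert G_{H\to Z}(G_{Z\to H}(z)) - z \rVert_1\big] \\
\mathcal{L}_{\text{id}} &= \mathbb{E}_{z}\big[\lVert G_{H\to Z}(z) - z \rVert_1\big] + \mathbb{E}_{h}\big[\lVert G_{Z\to H}(h) - h \rVert_1\big] \\
\mathcal{L} &= \mathcal{L}_{\text{GAN}}(G_{H\to Z}, D_Z) + \mathcal{L}_{\text{GAN}}(G_{Z\to H}, D_H) + \lambda_{\text{cyc}}\,\mathcal{L}_{\text{cyc}} + \lambda_{\text{id}}\,\mathcal{L}_{\text{id}}
\end{aligned}
```

The generators are trained to minimize this objective, while each discriminator maximizes its own adversarial term.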

Training Setup

- Unpaired horse and zebra image datasets

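- PatchGAN discriminators, which classify overlapping image patches as real or fake rather than judging the whole image at once

- Adam optimizer with learning rate scheduling

- Image buffers that store previously generated images and feed them to the discriminators, reducing model oscillation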
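The image buffer and learning-rate schedule can be sketched in plain Python as below. This is a minimal illustration, not this repository's code. `ImagePool` and `linear_decay_factor` are hypothetical names. The defaults (a 50-image buffer; a constant rate for 100 epochs, then linear decay over another 100) are the settings reported in the original CycleGAN paper and are assumed here rather than taken from this project. Because the pool only stores references, it works unchanged with `torch.Tensor` images.

```python
import random
from typing import Any, List, Optional


class ImagePool:
    """Hypothetical history buffer of generated images for discriminator updates.

    Capacity 50 matches the original CycleGAN paper (assumed, not this repo's value).
    """

    def __init__(self, capacity: int = 50, seed: Optional[int] = None) -> None:
        self.capacity = capacity
        self.images: List[Any] = []
        self.rng = random.Random(seed)

    def query(self, image: Any) -> Any:
        """Return the image the discriminator should see: the new one or an older one."""
        if self.capacity == 0:
            return image  # buffer disabled
        if len(self.images) < self.capacity:
            # Fill phase: store the freshly generated image and pass it through.
            self.images.append(image)
            return image
        if self.rng.random() < 0.5:
            # Swap: hand back a stored image and keep the new one in its slot.
            idx = self.rng.randrange(self.capacity)
            old = self.images[idx]
            self.images[idx] = image
            return old
        # Otherwise pass the new image through without storing it.
        return image


def linear_decay_factor(epoch: int, n_constant: int = 100, n_decay: int = 100) -> float:
    """Learning-rate multiplier: 1.0 for n_constant epochs, then linear decay toward 0."""
    return 1.0 - max(0, epoch - n_constant) / float(n_decay)
```

In a PyTorch training loop, the multiplier could be passed to `torch.optim.lr_scheduler.LambdaLR(optimizer, lr_lambda=linear_decay_factor)` with `scheduler.step()` called once per epoch, and each discriminator update would use `pool.query(fake)` in place of the freshly generated `fake`. The 50% swap probability means the discriminator sees a mix of current and past generator outputs, which is what damps oscillation.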

Evaluation & Metrics

- SSIM (Structural Similarity Index) to measure perceptual structure preservation

- PSNR (Peak Signal-to-Noise Ratio) to quantify reconstruction fidelity

- Side-by-side comparison of real, translated, and cycle-reconstructed images

- Visual inspection across multiple test samples
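The two metrics can be sketched as follows for grayscale images stored as flat lists of pixel values in [0, 255]. This is an illustrative, dependency-free version with hypothetical function names. Standard SSIM averages the statistic over local Gaussian-weighted windows, whereas `global_ssim` below evaluates it once over the whole image, so its values will differ from library implementations. Which image pairs the repository compares (for example, a real image and its cycle reconstruction) is also an assumption here.

```python
import math
from typing import Sequence


def psnr(ref: Sequence[float], test: Sequence[float], max_val: float = 255.0) -> float:
    """Peak signal-to-noise ratio in dB: 10 * log10(MAX^2 / MSE)."""
    if len(ref) != len(test) or not ref:
        raise ValueError("images must be non-empty and the same size")
    mse = sum((a - b) ** 2 for a, b in zip(ref, test)) / len(ref)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_val ** 2 / mse)


def global_ssim(x: Sequence[float], y: Sequence[float], max_val: float = 255.0) -> float:
    """SSIM computed over the whole image as one window (simplified)."""
    if len(x) != len(y) or not x:
        raise ValueError("images must be non-empty and the same size")
    n = len(x)
    c1 = (0.01 * max_val) ** 2  # stabilizing constants from the SSIM definition
    c2 = (0.03 * max_val) ** 2
    mu_x = sum(x) / n
    mu_y = sum(y) / n
    var_x = sum((a - mu_x) ** 2 for a in x) / n
    var_y = sum((b - mu_y) ** 2 for b in y) / n
    cov = sum((a - mu_x) * (b - mu_y) for a, b in zip(x, y)) / n
    # Luminance/contrast/structure terms combined into the usual closed form.
    num = (2 * mu_x * mu_y + c1) * (2 * cov + c2)
    den = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return num / den
```

For windowed SSIM and PSNR on real image arrays, `skimage.metrics.structural_similarity` and `skimage.metrics.peak_signal_noise_ratio` are common choices. If the generators end in a `tanh` (as in the reference CycleGAN implementation; assumed here), outputs should be mapped from [-1, 1] back to the same range as the real images before computing either metric.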

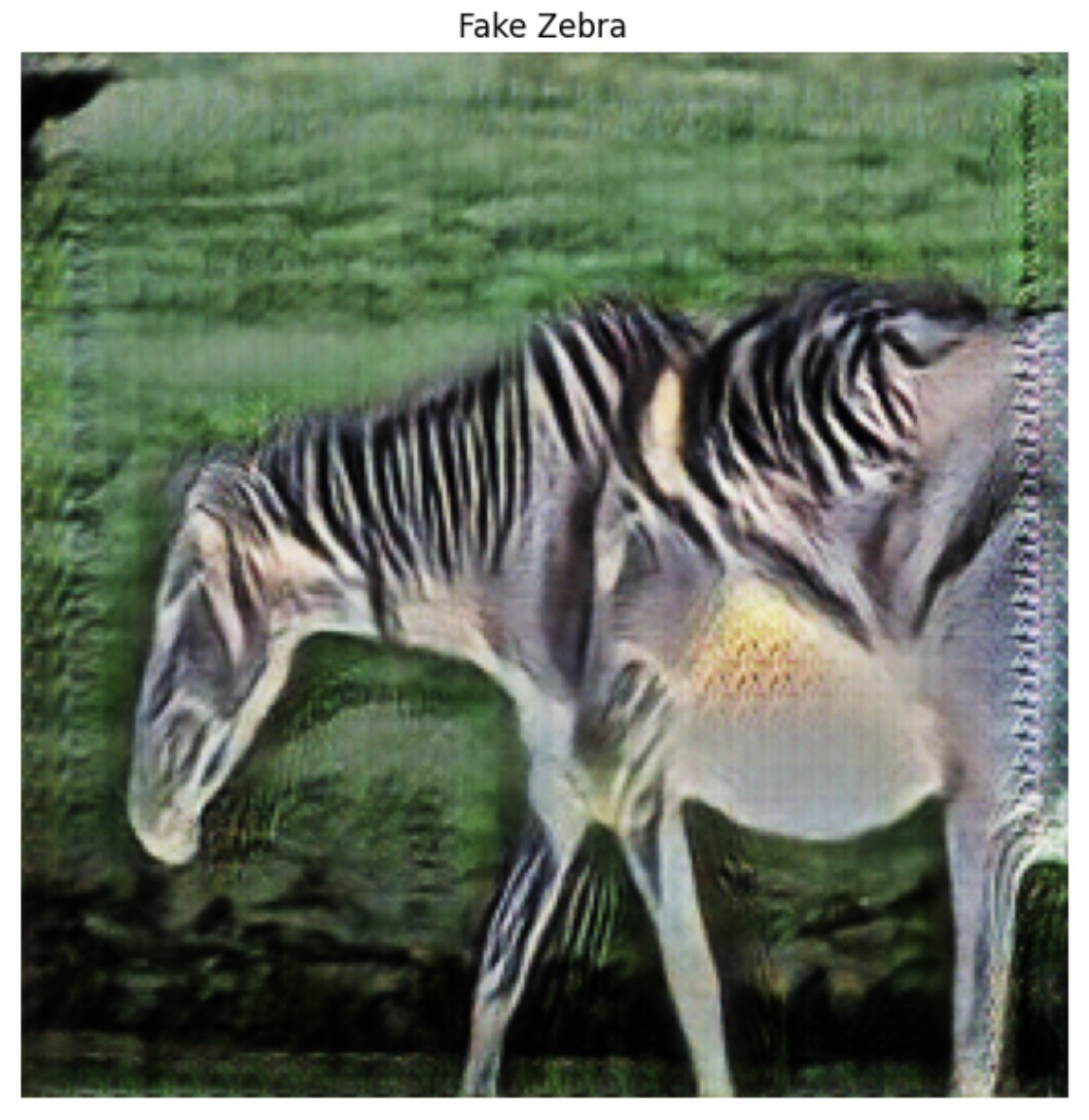

Qualitative Results

Horse → Zebra

Generated samples show stripe synthesis and texture transfer while preserving the underlying object structure, indicating effective domain translation and cycle consistency.

Repository

The full implementation, training scripts, and result visualizations are available at:

https://github.com/md-naim-hassan-saykat/horse-to-zebra-cyclegan